Bill C-26: A Review

On December 1st, in the House of Commons, Bill C-26 went up for second reading. The Hon. Marco Mendicino, Minister of Public Safety, began his speech by discussing the importance of cybersecurity in our current age. The case against Huawei (with respect to the 5G equipment ban) was still fresh in his mind when he argued that the Government of Canada needs to take more action on these issues. Here are some quotes from the minister’s speech:

The objectives of Bill C-26 are twofold. One, it proposes to amend the Telecommunications Act to add security, expressly as a policy objective. This would bring the telecommunications sector in line with other critical infrastructure sectors.

The changes to the legislation would authorize the Governor in Council and the Minister of Innovation, Science, and Industry to establish and implement, after consulting with the stakeholders, the policy statement entitled “Securing Canada’s Telecommunications System”, which I announced on May 19, 2022, together with my colleague, the Minister of Innovation, Science and Industry.

…

The second part of Bill C-26 introduces the new critical cyber systems protection act, or CCSPA. This new act would require designated operators in the federally regulated sectors of finance, telecommunications, energy and transportation to protect their critical cyber systems. To this end, designated operators would be obligated to establish a cybersecurity program, mitigate supply chain third party services or product risks, report cybersecurity incidents to the cyber centre and, finally, implement cybersecurity directions.

So the question becomes: what does Bill C-26 actually do, and is it a good idea? Let’s dive in.

What Does Bill C-26 Actually Say?

As the minister stated in his speech, the bill is broken down into two parts. The first part amends the Telecommunications Act and gives the government broad powers over how telecommunications service providers conduct their business. Effectively, the Minister or the Governor in Council can order a service provider to “…do anything or refrain from doing anything…” if they are of the opinion that the order is necessary to “…Secure the Canadian telecommunications system, including against the threat of interference, manipulation or disruption…”. The bill goes on to elaborate on what these orders could be, including:

- Prohibiting the use of any product or service

- Requiring the removal of any product or service

- Imposing conditions on the use of any product or service

- Prohibiting the service provider from entering into any service agreements for products/services

- Requiring the termination of any service agreements

- Prohibiting the upgrade of any product/service

- Requiring that procurement plans for products/services be subject to a review process

- Requiring that service providers develop a security plan in relation to their services, networks, and facilities

- Requiring that assessments be conducted to identify vulnerabilities

- Requiring that mitigation steps be taken against known/identified vulnerabilities

- Requiring that service providers implement specific standards

The bill also contains provisions that allow orders to be kept secret. While orders of this nature generally must be published in the Canada Gazette, an order may be withheld from the public, and non-disclosure may be imposed on anyone at the service provider (or elsewhere) who has knowledge of the order.

Part 2 focuses on the security of “critical cyber systems” within Canada. Taking very much the same tone as Part 1, it applies to “vital services and vital systems”, currently defined as:

- Telecommunication services

- Interprovincial or international pipeline and power line systems

- Nuclear energy systems

- Transportation systems within the legislative authority of Parliament

- Banking systems

- Clearing and settlement systems

In effect, it requires the following of in-scope organizations:

- That they establish a cybersecurity program

- That they report their program to the appropriate regulator for their area

- That they implement and maintain their cybersecurity program

- That they review their program at least annually

- That they notify the regulators of material changes to their program, with a focus on supply-chain and third-party risk

- That they report cybersecurity incidents to the relevant regulator

- That they keep records related to the operation of their cybersecurity program

Further, as in Part 1, in-scope organizations must comply with orders made by either the Governor in Council or the relevant regulator or Minister. From the bill:

"…may, by order, direct any designated operator or class of operators to comply with any measure set out in the direction for the purpose of protecting a critical cyber system…"

The bill also contains clauses governing confidential information passed to and from the Government, and how that information must be handled. Lastly, there are fines for in-scope individuals and organizations (under both Part 1 and Part 2) for non-compliance with the bill and/or with orders issued by authorized parties.

My thoughts

The “law” side of things

Most of the online debate around this bill is not directed against its fundamental tenets, but rather against the process by which orders can be made, the breadth of what the orders can contain, and the lack of oversight of, and recourse against, those orders. Not being a lawyer, I’ll defer to others on these arguments. Here are some resources:

- https://citizenlab.ca/2022/10/a-critical-analysis-of-proposed-amendments-in-bill-c-26-to-the-telecommunications-act/

- https://ccla.org/privacy/fix-c-26-cybersecurity-bill-is-short-on-rights-protections-and-accountability/

Distributed Risk Management

I think it is important to take a step back and understand how most organizations conduct risk assessments (specifically those related to security). Typically, risk/threat assessments are carried out at the highest levels of management. Management works through known “lists” of risks/threats and tries to understand the effect each one would have on the organization, estimating a likelihood and an impact for each risk. Based on this assessment, the organization then puts compensating controls in place to decrease the likelihood or impact of a potential risk.

As an example, let’s say that an organization identifies a changing regulatory landscape as a risk. If regulations change, it will have to do a significant amount of work to adapt its people, processes, and technology to meet them. In response, it may adopt the following compensating/mitigating control:

Example Control: Identify an individual/team responsible for keeping a pulse on the regulatory landscape

The thinking here is straightforward: the more lead time the organization has before changes take effect, the more time it has to react in meaningful ways, and therefore the lower the impact on the business.

The same reasoning suggests a few other controls an organization could adopt. For example:

- Set internal standards to high-water marks of regulations across the world, hoping that as regulations change locally, they won’t exceed the high-water mark already set.

- Pay for lobbying to keep regulations at a minimum in the business area.

- Create strong processes that support change to the environment and run those processes at a frequency that allows for the organization to react appropriately.

- Etc.
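To make the likelihood/impact reasoning above concrete, here is a minimal Python sketch of a tiny risk register. The 1 to 5 scales, the multiplicative score, and the `Risk`/`Control` names are illustrative assumptions rather than a standard methodology; it models the regulatory-change example, with the monitoring control reducing impact rather than likelihood.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)

    def score(self) -> int:
        # Common qualitative scoring: likelihood multiplied by impact
        return self.likelihood * self.impact


@dataclass
class Control:
    name: str
    likelihood_reduction: int = 0
    impact_reduction: int = 0


def apply_control(risk: Risk, control: Control) -> Risk:
    # Returns the residual risk; values never drop below 1 on the assumed scale
    return Risk(
        name=risk.name,
        likelihood=max(1, risk.likelihood - control.likelihood_reduction),
        impact=max(1, risk.impact - control.impact_reduction),
    )


# Hypothetical numbers for the regulatory-change example
regulatory_change = Risk("Changing regulatory landscape", likelihood=4, impact=4)
watch_team = Control("Team tracking regulatory changes", impact_reduction=2)

residual = apply_control(regulatory_change, watch_team)
print(regulatory_change.score(), residual.score())  # 16 8
```

The regulation itself is just as likely to change, but the organization is better prepared, so only the impact term moves.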

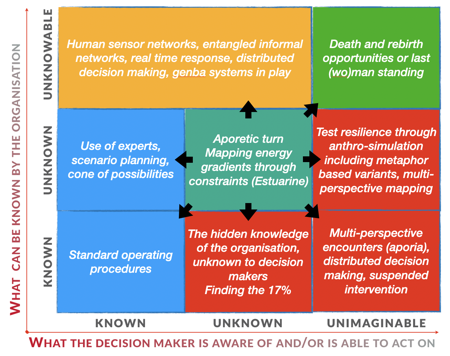

This is all well and good when the risks, impacts, and likelihood are all “knowable” to the organization. But what if that knowledge is unknowable, or worse, unimaginable to the organization? How do you then support decisions in this context?

Source: https://thecynefin.co/decision-support-in-context/

Let’s take an example in which the Government of Canada becomes aware (through intelligence signals) of a risk/threat to Canadian organizations, particularly those deemed critical. This may be a new risk/threat that needs to be addressed, or new information that changes the impact/likelihood calculations organizations should make. At this point, the following questions arise:

- How does the Government of Canada influence the decisions that organizations make with respect to the risk/threat identified?

- How does the Government of Canada ensure effective controls are implemented across the industry at large?

Now, you may not trust the Government to always make the right call here, but surely there are situations where the safety/security of Canadians is at risk and action needs to be taken. You could argue that secrecy is required in those situations, so how should distributed decision-making then play out among organizations, industry, and Government? How much information should, or could, be shared to support distributed decision-making? Would all those entities make the same risk-based decisions from their own context? Who has the final say?

I think this bill makes it clear that the Government has the final say on these issues.

Values of safety/security in Canada

I’ve been in the security industry here in Canada a long time, and I’ve helped small and large companies alike achieve their security goals. The one common thread is that security is generally not a “value” in the core sense of the word, but rather a “chore” imposed on them by regulations they must comply with. In some cases, these regulations are enforced by industry (think PCI DSS, the Payment Card Industry Data Security Standard), and in other cases they are a barrier to entry (like SOC 2, System and Organization Controls 2), imposed on the organization as a condition of doing business with other organizations that require it.

What I’m trying to say is that the security landscape in Canada is light at best. Because of this, cybersecurity is often not a real concern at the executive level of Canadian companies, and when it is, it is a check-box exercise in which organizations do the minimum required to pass an audit, often disregarding real security in the process.

For this reason, a cybersecurity framework and standards are sorely needed in Canada. While I’m glad that Part 2 applies to services deemed “vital”, I don’t think that list goes far enough. For example, food and water service providers are missing from it. In my opinion, Part 2 should be expanded, in some way, to all organizations operating within Canada. Creating classes of organizations and then enforcing different rules based on how “vital” they are is likely the right approach.

Conclusion

Bill C-26 is an interesting piece of legislation. It clearly skews the cybersecurity discussion towards the Government. There are pros and cons to this approach, and it will be interesting to see how it plays out. While I firmly believe that most Canadian organizations don’t care about cybersecurity, putting too much power in the hands of the Government is also a problem. For example, what happens when the safety/security of Canadians is compromised in a regulated industry but no Government order was issued to address the risk sufficiently? Who is liable then? How might organizations use this bill to further downplay their responsibility for cybersecurity in their daily operations?

Looking at this bill alongside Bill C-27 (which updates federal privacy law), it is good to know that the Government is at least trying to protect Canadians. There seems to be a lot of work to do on the “law” side of this bill, which will hopefully be addressed in committee.

About Shamir Charania

Shamir Charania, a seasoned cloud expert, possesses in-depth expertise in Amazon Web Services (AWS) and Microsoft Azure, complemented by his six-year tenure as a Microsoft MVP in Azure. At Keep Secure, Shamir provides strategic cloud guidance, from senior architecture-level decision-making to the technical chops to back it all up. With a strong emphasis on cybersecurity, he develops robust global cloud strategies that prioritize data protection and resilience. Leveraging complexity theory, Shamir delivers innovative and elegant solutions to complex requirements while driving business growth, positioning himself as a driving force in cloud transformation for organizations in the digital age.