Paths to Application Hosting

In the Beginning

Let’s say you have an idea for a web-based application and have found someone to build it, but you don’t know the next steps required to get it in front of your future customers. You need something to serve the application, but you don’t know the best way to go about that.

- You have your idea.

- You or someone else makes the web-application.

- ?

- Your company becomes the next big thing.

I am not going to discuss the mundane financial and staffing details of how to get there. Instead, this article covers the exciting world of deciding on a web-hosting solution!

Previously, you had two options:

Option 1: Find a web-hosting provider. You would do some internet searches and find a company that owned a set of physical servers and was in the business of providing website hosting. They would offer limited scripting language support, and you would manage your site through insecure FTP access.

Option 2: Build your own server.

- You would salvage an old desktop or buy some off-the-shelf hardware, as a proper server is expensive.

- Pay for a dedicated internet connection with a static IP.

- Install the OS and supporting software.

- Patch and replace hardware when needed.

Once you had outgrown that setup, you were heading into uncharted territory. You could rent server racks in a datacentre or build out your own server room, each with a whole new set of problems to manage, huge upfront costs, and staffing demands. With the advent of public clouds, things have become simpler, and everything can be done without touching any physical hardware or needing to know all the answers.

Current State

Fast forward to the present day and there are many more ways to host a web-based application, with varying levels of complexity and management. The hardest part is knowing what to choose and where to start.

Most of the time you will start out by hiring a web/app developer, or you will end up being the sole developer yourself. How to set up and maintain a server isn’t something many developers know or care to know. They know how to set up the application on their own computer, and they want to re-create that somewhere more accessible.

Hosting Options

Here are the different levels of hosting and how best to achieve each one.

Static Website

A basic HTML and CSS website with some client-side JavaScript functionality. It is static because nothing on the server is modified and no server-side scripting language is involved, just file hosting. The site can have some dynamic ability, but all of it runs within the browser accessing the website. You don’t need any processing or computing running on your side. This would be enough if your application is made up of forms and interacts with 3rd party API services. You can even receive and display data. You just can’t save anything on your side.

If this fits your requirements, it is the best choice to start with. Your website would sit in the file storage of a public cloud, with your custom domain name pointed at it. This is all anyone really needs for serving up a company website. It is also the most secure option, as there is no server-side code to exploit. The only requirement is someone who understands HTML, CSS and JavaScript, as this approach doesn’t come with a fancy management interface and requires some coding knowledge.

Dynamic Website

You are now getting into the realm of a software-driven web application using server-side scripting or compiled code. To run the code your application is written in, you may need web server or script interpretation software. You will now have to worry about software patching, resource usage, logging, availability, etc.

You will gain a lot more flexibility but inherit a lot more responsibilities. This is the level where you really must worry about maintaining and securing your software and code. Not maintaining your software updates, writing bad code, or using insecure add-ons can make your app an easy target. Not only are malicious actors now well organized, but they have automated tools that can easily scan for vulnerable websites. You don’t need to be famous to be exploited.

Almost all Content Management System (CMS) software, such as WordPress, operates at this level, as you can have a powerful software backend to help build and manage a website. You can use a CMS to add application functionality, but it will still involve adding custom code. You are better off building your web application outside of a CMS.

There are many options for hosting your application. It is easier to move from small (hosted app) to large (virtual machine) than the other way around, so it is best to start at the top of this list and move down when needed.

Hosting Options:

- App-hosting

The provider manages the web server and server infrastructure. You just provide the web application code.

- Container

The provider manages the server infrastructure. The container is a layer on top of a shared host server. You are in full control over what software is installed inside it.

- Virtual Machine / Instance

You manage everything other than the physical hardware.

You can still go the route of a physical server running out of your basement, but why would you want to do that to yourself?

Web-app hosting can scale as you grow and provides almost everything you will need. If you need more customization on the software side, you will need to go with a container. Starting out with a virtual machine (instance) is generally overkill and puts all the server management and security on you. Some of the web-app hosting cloud services are Azure Web App, AWS App Runner, and Google Cloud App Engine.

Dynamic Website With a Database

Now you’re getting into the realm of storing data. Generally, that is customer data, and failing to keep it secure will land you in hot water and could sink your company. Never store data you don’t need, and store passwords only as salted one-way hashes, never in a recoverable form. Limit who has access to this data.
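Storing passwords one-way, as described above, usually means running them through a salted, deliberately slow hash. Here is a minimal sketch using only Python’s standard library. The function names and the iteration count are illustrative assumptions, not a recommendation for any particular application, and a maintained authentication library is usually the safer choice in production.

```python
import hashlib
import hmac
import os

# Illustrative work factor (an assumption); higher is slower for attackers and for you.
ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) to store. The password itself is never stored."""
    salt = os.urandom(16)  # a fresh random salt for every password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, digest: bytes) -> bool:
    """Re-hash the login attempt with the stored salt and compare in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)
```

Because only the salt and digest are saved, a leaked database does not directly reveal anyone’s password.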

Dynamic Website With a Database and Worker Processes

This is where you add background processes to handle scheduled tasks such as email campaigns or integrations with partners.
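The idea of a worker process can be sketched in a few lines: the web-facing code drops a job onto a queue and returns immediately, while a separate worker picks jobs up and does the slow part. This toy version uses a thread and an in-memory queue purely for illustration. In a real deployment the worker would typically be its own process or container fed by a managed queue, and the names below are hypothetical.

```python
import queue
import threading


def run_worker(jobs: queue.Queue, results: list) -> None:
    """Process jobs one at a time until a None sentinel arrives."""
    while True:
        job = jobs.get()
        if job is None:  # sentinel: no more work
            break
        # Stand-in for the slow task, e.g. sending one campaign email.
        results.append(f"sent email to {job}")


jobs: queue.Queue = queue.Queue()
results: list = []
worker = threading.Thread(target=run_worker, args=(jobs, results))
worker.start()

# The "web" side only enqueues work and moves on.
jobs.put("customer@example.com")
jobs.put(None)
worker.join()
print(results)
```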

At this level you will probably switch to a container-based service such as AWS ECS on Fargate or Azure App Service. You may need the processing power of dedicated virtual machines (instances), but generally this should be avoided unless needed. Containers can now be sized to match all but the largest servers.

Cluster of Servers With a Database

Now you are getting into the big leagues and need to worry about adjusting to traffic load, high availability, and disaster recovery. At this level it is not sustainable to run a high-volume application on a set of manually created hosting resources. You will want a cluster that adapts to changes in web traffic and can heal itself when issues arise.

If your application is broken out into microservices, you could explore using Kubernetes. Be warned, there is a steep learning curve in the setup and management of a Kubernetes cluster. You will want dedicated staff who understand its proper setup and maintenance if you go this route. Azure offers Kubernetes without the management overhead through its Container Apps service.

Cluster of Servers With a Database Cluster

If you have made it here, you are now concerned with 24/7 uptime and have contracts with customers and partners requiring long numbers containing the digit ‘9’. You hear terms such as Service Level Agreements (SLAs), Recovery Time Objective (RTO), Recovery Point Objective (RPO), and other initialisms. You probably shouldn’t be reading this article as you or your team have soared beyond what this article can provide you.
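To put those nines in concrete terms, an availability percentage translates directly into a downtime budget. The small helper below is only an arithmetic sketch, and its function name is made up for this example.

```python
def allowed_downtime_minutes(availability_percent: float, period_days: float = 365.0) -> float:
    """Minutes of downtime permitted over the period at the given availability."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - availability_percent / 100)


for target in (99.0, 99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% -> {minutes:.1f} minutes per year")
```

Three nines (99.9%) leaves roughly 8.8 hours of downtime per year, while five nines (99.999%) leaves only about 5 minutes.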

Multi-Region Replication

When your customer base is not primarily in a single geographical area, anyone outside the primary area will experience slowness when accessing your application. This is latency caused by the physical distance between them and your hosting service, and you will need to investigate ways to reduce it. A Content Delivery Network (CDN) will solve most read performance issues. If writes are the main pain point, you will have to look into multi-region operation of your application. In doing so, you will need to worry about how data is stored and replicated across multiple servers in multiple physically separated geographical regions. This comes with many complex problems to solve and will require re-architecting your application and data storage.
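A rough calculation shows why distance matters. Light in optical fibre travels at roughly 200,000 km per second, so physics alone sets a floor on round-trip time. The sketch below assumes a straight cable and ignores routing, processing, and queuing delays, so real latency will be higher. The function name is invented for this example.

```python
FIBER_KM_PER_MS = 200.0  # approximate: light in fibre covers about 200 km per millisecond


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time over a straight fibre run of this length."""
    return 2 * distance_km / FIBER_KM_PER_MS


print(min_round_trip_ms(10_000))  # 100.0
```

A customer 10,000 km from your servers cannot see a round trip faster than about 100 ms, and a page that makes several sequential requests pays that cost each time.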

Decision Trees

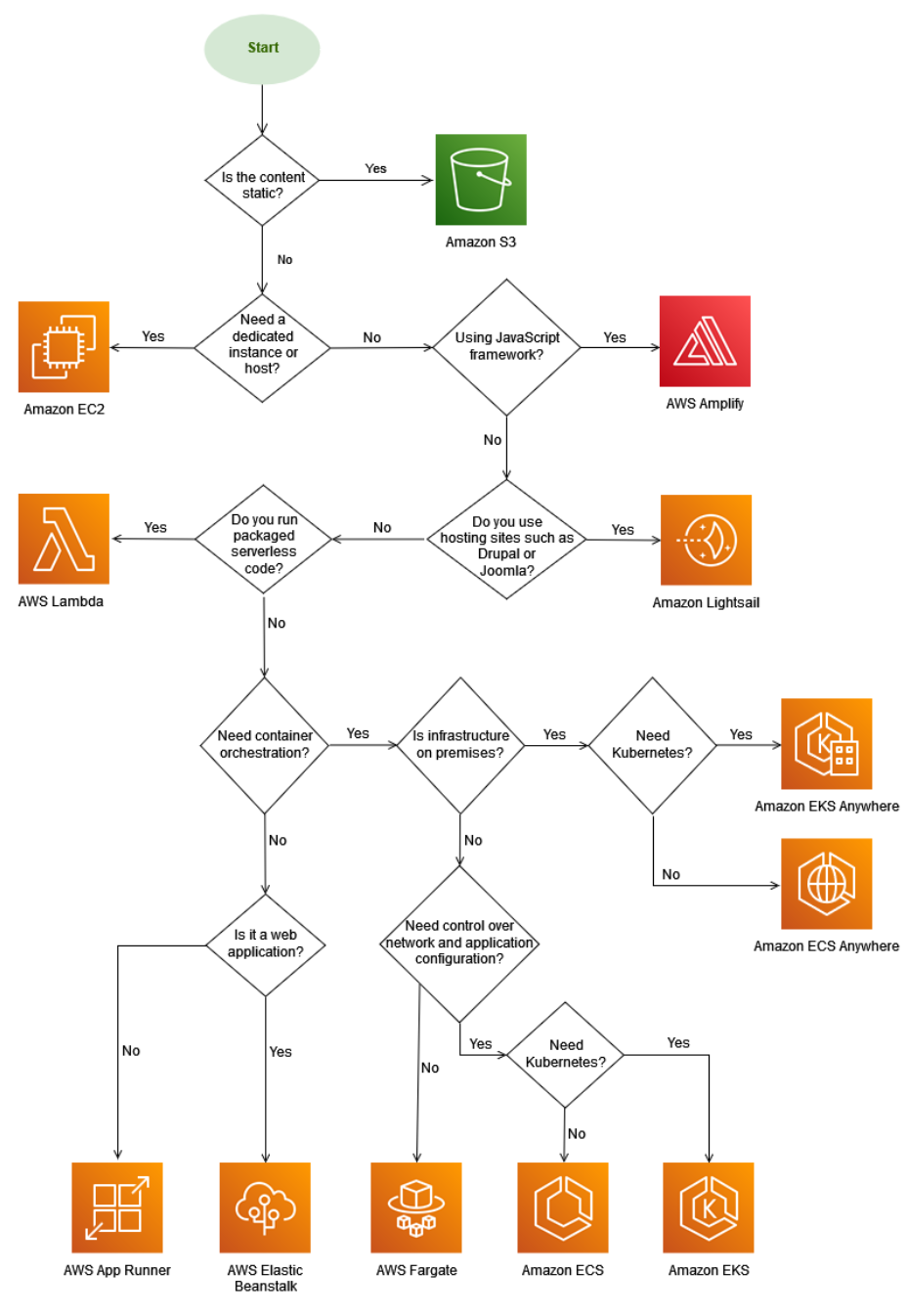

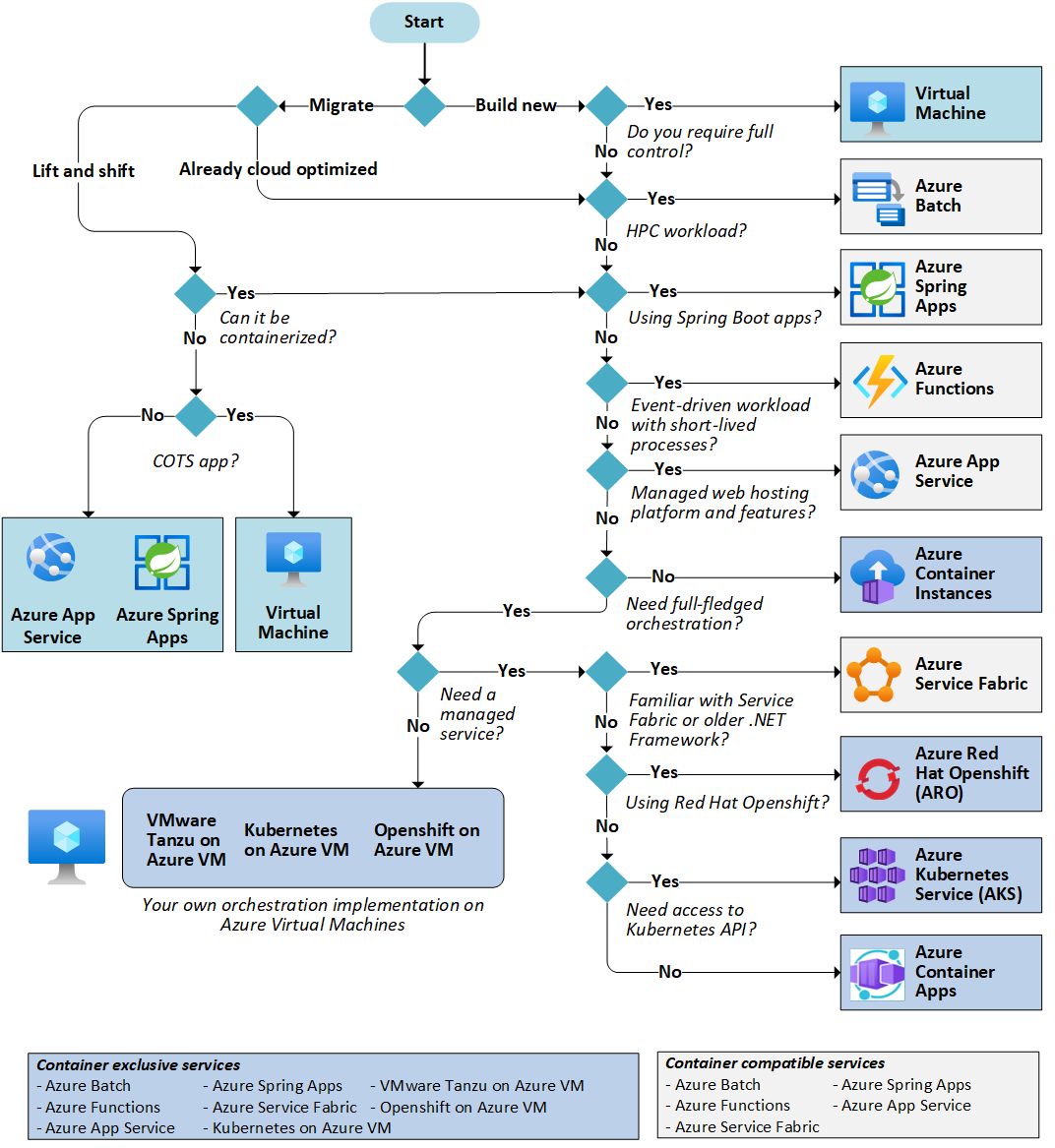

AWS and Azure have made decision tree diagrams for all of their hosting services.

AWS: Choosing a Hosting Service

Azure: Choosing a Hosting Service

Best Practices

Use Containers Instead of VMs/Instances

Containers allow you to focus on what your application needs to operate. Everything else is external and can be managed by someone else. If your application has several dependencies, it can take some trial and error to set up. With containers, you don’t have a graphical desktop to work with. Everything is command-line, but they will make things much easier to manage in the long run. The size of the final image is important, so don’t include anything you don’t need.

Patching

Patching of self-managed servers, even if automated, is not maintenance free. You will generally need additional monitoring to make sure everything has been patched correctly. If a patch breaks your server, you may be scrambling to build a replacement and will experience an embarrassing outage.

With containers, you build the patching step into the image creation. The first mistake people make when using containers is not rebuilding the images and replacing the containers periodically. To keep things up to date, you would run your containers as services and schedule the recreation of the image and replacement of the container to happen weekly. If something breaks, you still have the previous image to fall back on while you troubleshoot. With containers, you can easily test software upgrades and new application versions without running a dedicated testing environment.

Pre-Defined Infrastructure

This is the process of defining your hosting infrastructure in text files and having it deployed programmatically. These files can be templates that are interpreted by deployment software, scripts that run a series of commands to do the same thing, or a mixture of both. Once you have them, it is easy to re-deploy your infrastructure and get back up and running fast. They also serve as a form of low-level documentation.
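The core idea behind template-driven deployment can be shown with a toy example: describe the infrastructure you want as data, compare it with what currently exists, and let software work out the changes. This is not how any particular deployment tool is implemented, and the resource names and settings are hypothetical.

```python
def plan(current: dict, desired: dict) -> dict:
    """Compare what exists with what the template asks for."""
    return {
        "create": sorted(n for n in desired if n not in current),
        "update": sorted(n for n in desired if n in current and current[n] != desired[n]),
        "delete": sorted(n for n in current if n not in desired),
    }


# Hypothetical template: the infrastructure you want.
desired = {
    "web-app": {"size": "small", "instances": 2},
    "database": {"size": "medium"},
}

# Hypothetical current state: what is actually deployed.
current = {
    "web-app": {"size": "small", "instances": 1},
    "old-cache": {"size": "small"},
}

print(plan(current, desired))
```

Here the plan is to create `database`, update `web-app`, and delete `old-cache`. Running the same comparison against an empty environment yields a plan that recreates everything, which is what makes redeployment fast.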

Disaster Recovery

With proper disaster recovery planning, if something goes wrong, your downtime is minimal, and the anxiety of a potential disaster is greatly reduced. Once you have your infrastructure templated and your data is being backed up, you can easily kick off the recreation of everything in a new location. Once you have fully tested this process, you can work towards automating the failover and making your downtime as small as possible. The last position you want to be in is reacting to a disaster without a plan. Having a plan will eliminate those sleepless nights spent stressing over impending doom.

Documentation and Auditing

Having your infrastructure and application setup in the form of templates or scripts will act as a form of documentation. It might not be readable by everyone, but it is packed full of detail for those who can read it. It can even fill in as documentation in the event of a security audit. This doesn’t mean human-readable documentation shouldn’t be created. You will need additional documentation describing where to find everything, how to deploy the templates/scripts, and where they were previously deployed.

Staff Turn-Over

As a business owner or IT manager, if you don’t make time to develop repeatable infrastructure, you are effectively backing yourself into a corner that will get worse over time. At a minimum, you should allow your staff time to properly document how your infrastructure and application are set up. Startup culture breeds agility over dependability. Everyone wears many hats and there isn’t time for cross-training. If a person with a key set of knowledge leaves on short notice, you will be left with a Pandora’s box that no one wants to touch. If something ever fails, everyone is in a panic, spending days to recover and losing years off their lives. Rediscovering how the current setup works can take a long time and cost far more resource hours than documenting it up front would have. Discovered design decisions may produce more questions than answers.

Conclusion

Everyone starts out with a limited budget and has to face the same challenges. The key to becoming the next tech giant is to not grow too big, too fast. That is how you stumble while everyone is watching. The biggest mistake is skipping over the required steps and deciding to deal with them only once they become a problem. Many start-ups that are instantly successful end up too busy expanding the application’s features and put off expanding the infrastructure to meet the needs of the application. If you leave it too long, it will not be a simple upgrade but a complete overhaul, and by the time it becomes a problem, your staff will be too overloaded to deal with it.

About James MacGowan

James started out as a web developer with an interest in hardware and open-source software development. He made the switch to IT infrastructure and spent many years with server virtualization, networking, storage, and domain management.

After exhausting the challenges and learning opportunities provided by traditional IT infrastructure, and wanting to make full use of his developer background, he made the switch to cloud computing.

For the last 3 years he has been dedicated to automating and providing secure cloud solutions on AWS and Azure for our clients.